Randomized Algorithms (Spring 2013)/Threshold and Concentration

Erdős–Rényi Random Graphs

Consider a graph [math]\displaystyle{ G(V,E) }[/math] which is randomly generated as:

- [math]\displaystyle{ |V|=n }[/math];

- [math]\displaystyle{ \forall \{u,v\}\in{V\choose 2} }[/math], [math]\displaystyle{ uv\in E }[/math] independently with probability [math]\displaystyle{ p }[/math].

Such a graph is denoted by [math]\displaystyle{ G(n,p) }[/math]. This is called the Erdős–Rényi model or [math]\displaystyle{ G(n,p) }[/math] model for random graphs.

Informally, the presence of every edge of [math]\displaystyle{ G(n,p) }[/math] is determined by an independent coin flip (with probability [math]\displaystyle{ p }[/math] of HEADS).
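The coin-flipping description translates directly into a sampler. The following is a minimal Python sketch; the function name `sample_gnp` and the representation of a graph as a set of vertex pairs are illustrative assumptions, not part of the notes.

```python
import itertools
import random


def sample_gnp(n, p, rng=None):
    """Sample the edge set of G(n, p) on vertices 0, 1, ..., n-1.

    Returns a set of pairs (u, v) with u < v.
    """
    if rng is None:
        rng = random.Random()
    edges = set()
    for u, v in itertools.combinations(range(n), 2):
        # One independent coin flip per unordered pair {u, v}:
        # rng.random() is uniform on [0, 1), so this succeeds with probability p.
        if rng.random() < p:
            edges.add((u, v))
    return edges


if __name__ == "__main__":
    g = sample_gnp(6, 0.5, random.Random(1))
    print(sorted(g))
```

Passing a seeded `random.Random` makes a run reproducible, which is convenient when comparing experiments.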

Monotone properties

A graph property is a predicate on graphs which depends only on the structure of the graph.

Definition - Let [math]\displaystyle{ \mathcal{G}_n=2^{V\choose 2} }[/math], where [math]\displaystyle{ |V|=n }[/math], be the set of all possible graphs on [math]\displaystyle{ n }[/math] vertices. A graph property is a boolean function [math]\displaystyle{ P:\mathcal{G}_n\rightarrow\{0,1\} }[/math] which is invariant under permutation of vertices, i.e. [math]\displaystyle{ P(G)=P(H) }[/math] whenever [math]\displaystyle{ G }[/math] is isomorphic to [math]\displaystyle{ H }[/math].

We are interested in monotone properties, i.e., properties that cannot be destroyed by adding edges: if a graph has the property, it still has the property after any edges are added.

Definition - A graph property [math]\displaystyle{ P }[/math] is monotone if for any [math]\displaystyle{ G\subseteq H }[/math], both on [math]\displaystyle{ n }[/math] vertices, [math]\displaystyle{ G }[/math] having property [math]\displaystyle{ P }[/math] implies [math]\displaystyle{ H }[/math] having property [math]\displaystyle{ P }[/math].

By seeing the property as a function mapping a set of edges to a numerical value in [math]\displaystyle{ \{0,1\} }[/math], a monotone property is just a monotonically increasing set function.

Some examples of monotone graph properties:

- Hamiltonian;

- [math]\displaystyle{ k }[/math]-clique;

- contains a subgraph isomorphic to some [math]\displaystyle{ H }[/math];

- non-planar;

- chromatic number [math]\displaystyle{ \gt k }[/math] (i.e., not [math]\displaystyle{ k }[/math]-colorable);

- girth [math]\displaystyle{ \lt \ell }[/math].

From the last two properties, you can see another reason that the Erdős theorem is unintuitive.

Some examples of non-monotone graph properties:

- Eulerian;

- contains an induced subgraph isomorphic to some [math]\displaystyle{ H }[/math];

For all monotone graph properties, we have the following theorem.

Theorem - Let [math]\displaystyle{ P }[/math] be a monotone graph property. Suppose [math]\displaystyle{ G_1=G(n,p_1) }[/math], [math]\displaystyle{ G_2=G(n,p_2) }[/math], and [math]\displaystyle{ 0\le p_1\le p_2\le 1 }[/math]. Then

- [math]\displaystyle{ \Pr[P(G_1)]\le \Pr[P(G_2)] }[/math].

Although the statement of the theorem looks very natural, it is difficult to directly evaluate the probability that a random graph has a given property. However, the theorem can be proved very easily using the idea of coupling, a proof technique in probability theory which compares two unrelated random variables by forcing them to be related.

Proof. For any [math]\displaystyle{ \{u,v\}\in{[n]\choose 2} }[/math], let [math]\displaystyle{ X_{\{u,v\}} }[/math] be independently and uniformly distributed over the continuous interval [math]\displaystyle{ [0,1] }[/math]. Let [math]\displaystyle{ uv\in G_1 }[/math] if and only if [math]\displaystyle{ X_{\{u,v\}}\in[0,p_1] }[/math] and let [math]\displaystyle{ uv\in G_2 }[/math] if and only if [math]\displaystyle{ X_{\{u,v\}}\in[0,p_2] }[/math].

It is obvious that [math]\displaystyle{ G_1\sim G(n,p_1)\, }[/math] and [math]\displaystyle{ G_2\sim G(n,p_2)\, }[/math]. For any [math]\displaystyle{ \{u,v\} }[/math], [math]\displaystyle{ uv\in G_1 }[/math] means that [math]\displaystyle{ X_{\{u,v\}}\in[0,p_1]\subseteq [0,p_2] }[/math], which implies that [math]\displaystyle{ uv\in G_2 }[/math]. Thus, [math]\displaystyle{ G_1\subseteq G_2 }[/math].

Since [math]\displaystyle{ P }[/math] is monotone, [math]\displaystyle{ P(G_1)=1 }[/math] implies [math]\displaystyle{ P(G_2)=1 }[/math]. Thus,

- [math]\displaystyle{ \Pr[P(G_1)=1]\le \Pr[P(G_2)=1] }[/math].

- [math]\displaystyle{ \square }[/math]
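The coupling in the proof can be sketched in Python as follows. The name `coupled_graphs` and the edge-set representation are assumptions made for illustration. The code uses the test `x < p` instead of membership in the closed interval, which changes nothing in distribution since a single point has probability zero.

```python
import itertools
import random


def coupled_graphs(n, p1, p2, rng=None):
    """Sample G1 ~ G(n, p1) and G2 ~ G(n, p2) from shared randomness.

    Assumes 0 <= p1 <= p2 <= 1. Each graph is a set of pairs (u, v), u < v.
    """
    if rng is None:
        rng = random.Random()
    g1, g2 = set(), set()
    for pair in itertools.combinations(range(n), 2):
        x = rng.random()  # the shared uniform variable X_{u,v}
        if x < p1:
            g1.add(pair)
        if x < p2:
            g2.add(pair)
    return g1, g2


if __name__ == "__main__":
    g1, g2 = coupled_graphs(8, 0.3, 0.6, random.Random(0))
    print(len(g1), len(g2), g1 <= g2)
```

Each graph on its own has the right marginal distribution, yet every edge of `g1` is also an edge of `g2`, which is exactly what the monotonicity argument needs.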

Threshold phenomenon

One of the most fascinating phenomena of random graphs is that for so many natural graph properties, the random graph [math]\displaystyle{ G(n,p) }[/math] suddenly changes from almost always not having the property to almost always having the property as [math]\displaystyle{ p }[/math] grows in a very small range.

A monotone graph property [math]\displaystyle{ P }[/math] is said to have the threshold [math]\displaystyle{ p(n) }[/math] if

- when [math]\displaystyle{ p\ll p(n) }[/math], [math]\displaystyle{ \Pr[P(G(n,p))]\rightarrow 0 }[/math] as [math]\displaystyle{ n\rightarrow\infty }[/math] (we also say that [math]\displaystyle{ G(n,p) }[/math] almost always does not have [math]\displaystyle{ P }[/math]); and

- when [math]\displaystyle{ p\gg p(n) }[/math], [math]\displaystyle{ \Pr[P(G(n,p))]\rightarrow 1 }[/math] as [math]\displaystyle{ n\rightarrow\infty }[/math] (we also say that [math]\displaystyle{ G(n,p) }[/math] almost always has [math]\displaystyle{ P }[/math]).

The classic method for proving the threshold is the so-called second moment method (Chebyshev's inequality).

Threshold for 4-clique

Theorem - The threshold for a random graph [math]\displaystyle{ G(n,p) }[/math] to contain a 4-clique is [math]\displaystyle{ p=n^{-2/3} }[/math].

We formulate the problem as such. For any [math]\displaystyle{ 4 }[/math]-subset of vertices [math]\displaystyle{ S\in{V\choose 4} }[/math], let [math]\displaystyle{ X_S }[/math] be the indicator random variable such that

- [math]\displaystyle{ X_S= \begin{cases} 1 & S\mbox{ is a clique},\\ 0 & \mbox{otherwise}. \end{cases} }[/math]

Let [math]\displaystyle{ X=\sum_{S\in{V\choose 4}}X_S }[/math] be the total number of 4-cliques in [math]\displaystyle{ G }[/math].

It is sufficient to prove the following lemma.

Lemma - If [math]\displaystyle{ p=o(n^{-2/3}) }[/math], then [math]\displaystyle{ \Pr[X\ge 1]\rightarrow 0 }[/math] as [math]\displaystyle{ n\rightarrow\infty }[/math].

- If [math]\displaystyle{ p=\omega(n^{-2/3}) }[/math], then [math]\displaystyle{ \Pr[X\ge 1]\rightarrow 1 }[/math] as [math]\displaystyle{ n\rightarrow\infty }[/math].

Proof. The first claim is proved by the first moment (expectation and Markov's inequality) and the second claim is proved by the second moment method (Chebyshev's inequality).

Every 4-clique has 6 edges, thus for any [math]\displaystyle{ S\in{V\choose 4} }[/math],

- [math]\displaystyle{ \mathbf{E}[X_S]=\Pr[X_S=1]=p^6 }[/math].

By the linearity of expectation,

- [math]\displaystyle{ \mathbf{E}[X]=\sum_{S\in{V\choose 4}}\mathbf{E}[X_S]={n\choose 4}p^6 }[/math].

Applying Markov's inequality

- [math]\displaystyle{ \Pr[X\ge 1]\le \mathbf{E}[X]=O(n^4p^6)=o(1) }[/math], if [math]\displaystyle{ p=o(n^{-2/3}) }[/math].

The first claim is proved.

To prove the second claim, it suffices to show that [math]\displaystyle{ \Pr[X=0]=o(1) }[/math] if [math]\displaystyle{ p=\omega(n^{-2/3}) }[/math]. By Chebyshev's inequality,

- [math]\displaystyle{ \Pr[X=0]\le\Pr[|X-\mathbf{E}[X]|\ge\mathbf{E}[X]]\le\frac{\mathbf{Var}[X]}{(\mathbf{E}[X])^2} }[/math],

where the variance is computed as

- [math]\displaystyle{ \mathbf{Var}[X]=\mathbf{Var}\left[\sum_{S\in{V\choose 4}}X_S\right]=\sum_{S\in{V\choose 4}}\mathbf{Var}[X_S]+\sum_{S,T\in{V\choose 4}, S\neq T}\mathbf{Cov}(X_S,X_T) }[/math].

For any [math]\displaystyle{ S\in{V\choose 4} }[/math],

- [math]\displaystyle{ \mathbf{Var}[X_S]=\mathbf{E}[X_S^2]-\mathbf{E}[X_S]^2\le \mathbf{E}[X_S^2]=\mathbf{E}[X_S]=p^6 }[/math]. Thus the first term of above formula is [math]\displaystyle{ \sum_{S\in{V\choose 4}}\mathbf{Var}[X_S]=O(n^4p^6) }[/math].

We now compute the covariances. For any [math]\displaystyle{ S,T\in{V\choose 4} }[/math] that [math]\displaystyle{ S\neq T }[/math]:

- Case.1: [math]\displaystyle{ |S\cap T|\le 1 }[/math], so [math]\displaystyle{ S }[/math] and [math]\displaystyle{ T }[/math] do not share any edges. [math]\displaystyle{ X_S }[/math] and [math]\displaystyle{ X_T }[/math] are independent, thus [math]\displaystyle{ \mathbf{Cov}(X_S,X_T)=0 }[/math].

- Case.2: [math]\displaystyle{ |S\cap T|= 2 }[/math], so [math]\displaystyle{ S }[/math] and [math]\displaystyle{ T }[/math] share an edge. Since [math]\displaystyle{ |S\cup T|=6 }[/math], there are [math]\displaystyle{ O(n^6) }[/math] pairs of such [math]\displaystyle{ S }[/math] and [math]\displaystyle{ T }[/math].

- [math]\displaystyle{ \mathbf{Cov}(X_S,X_T)=\mathbf{E}[X_SX_T]-\mathbf{E}[X_S]\mathbf{E}[X_T]\le\mathbf{E}[X_SX_T]=\Pr[X_S=1\wedge X_T=1]=p^{11} }[/math]

- since there are 11 edges in the union of two 4-cliques that share a common edge. The contribution of these pairs is [math]\displaystyle{ O(n^6p^{11}) }[/math].

- Case.3: [math]\displaystyle{ |S\cap T|= 3 }[/math], so [math]\displaystyle{ S }[/math] and [math]\displaystyle{ T }[/math] share a triangle. Since [math]\displaystyle{ |S\cup T|=5 }[/math], there are [math]\displaystyle{ O(n^5) }[/math] pairs of such [math]\displaystyle{ S }[/math] and [math]\displaystyle{ T }[/math]. By the same argument,

- [math]\displaystyle{ \mathbf{Cov}(X_S,X_T)\le\Pr[X_S=1\wedge X_T=1]=p^{9} }[/math]

- since there are 9 edges in the union of two 4-cliques that share a triangle. The contribution of these pairs is [math]\displaystyle{ O(n^5p^{9}) }[/math].

Putting all these together,

- [math]\displaystyle{ \mathbf{Var}[X]=O(n^4p^6+n^6p^{11}+n^5p^{9}). }[/math]

And

- [math]\displaystyle{ \Pr[X=0]\le\frac{\mathbf{Var}[X]}{(\mathbf{E}[X])^2}=O(n^{-4}p^{-6}+n^{-2}p^{-1}+n^{-3}p^{-3}) }[/math],

which is [math]\displaystyle{ o(1) }[/math] if [math]\displaystyle{ p=\omega(n^{-2/3}) }[/math]. The second claim is also proved.

- [math]\displaystyle{ \square }[/math]
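To see why [math]\displaystyle{ n^{-2/3} }[/math] is the right scale, one can evaluate [math]\displaystyle{ \mathbf{E}[X]={n\choose 4}p^6 }[/math] at [math]\displaystyle{ p=c\,n^{-2/3} }[/math]: the expectation approaches [math]\displaystyle{ c^6/24 }[/math], so it neither vanishes nor blows up for constant [math]\displaystyle{ c }[/math]. The Python sketch below computes this expectation and, for small graphs, counts 4-cliques by brute force. The function names and the edge-set representation are illustrative assumptions.

```python
import itertools
from math import comb


def expected_4_cliques(n, p):
    """E[X] = C(n, 4) * p^6, by linearity of expectation."""
    return comb(n, 4) * p ** 6


def count_4_cliques(n, edges):
    """Brute-force count of 4-cliques.

    `edges` is a set of pairs (u, v) with u < v on vertices 0..n-1.
    """
    count = 0
    for s in itertools.combinations(range(n), 4):
        # combinations yields sorted tuples, so every pair (u, v) has u < v.
        if all(pair in edges for pair in itertools.combinations(s, 2)):
            count += 1
    return count


if __name__ == "__main__":
    for n in (10 ** 2, 10 ** 4, 10 ** 6):
        for c in (0.5, 1.0, 2.0):
            p = c * n ** (-2 / 3)
            print(n, c, expected_4_cliques(n, p))
```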

Threshold for balanced subgraphs

The above theorem can be generalized to any "balanced" subgraphs.

Definition - The density of a graph [math]\displaystyle{ G(V,E) }[/math], denoted [math]\displaystyle{ \rho(G)\, }[/math], is defined as [math]\displaystyle{ \rho(G)=\frac{|E|}{|V|} }[/math].

- A graph [math]\displaystyle{ G(V,E) }[/math] is balanced if [math]\displaystyle{ \rho(H)\le \rho(G) }[/math] for all subgraphs [math]\displaystyle{ H }[/math] of [math]\displaystyle{ G }[/math].

Cliques are balanced, because [math]\displaystyle{ \frac{{k\choose 2}}{k}\le \frac{{n\choose 2}}{n} }[/math] for any [math]\displaystyle{ k\le n }[/math]. The threshold for 4-clique is a direct corollary of the following general theorem.

Theorem (Erdős–Rényi 1960) - Let [math]\displaystyle{ H }[/math] be a balanced graph with [math]\displaystyle{ k }[/math] vertices and [math]\displaystyle{ \ell }[/math] edges. The threshold for the property that a random graph [math]\displaystyle{ G(n,p) }[/math] contains a (not necessarily induced) subgraph isomorphic to [math]\displaystyle{ H }[/math] is [math]\displaystyle{ p=n^{-k/\ell}\, }[/math].

Sketch of proof. For any [math]\displaystyle{ S\in{V\choose k} }[/math], let [math]\displaystyle{ X_S }[/math] indicate whether [math]\displaystyle{ G_S }[/math] (the subgraph of [math]\displaystyle{ G }[/math] induced by [math]\displaystyle{ S }[/math]) contains a subgraph isomorphic to [math]\displaystyle{ H }[/math]. Then

- [math]\displaystyle{ p^{\ell}\le\mathbf{E}[X_S]\le k!p^{\ell} }[/math], since there are at most [math]\displaystyle{ k! }[/math] ways to match the substructure.

Note that [math]\displaystyle{ k }[/math] does not depend on [math]\displaystyle{ n }[/math]. Thus, [math]\displaystyle{ \mathbf{E}[X_S]=\Theta(p^{\ell}) }[/math]. Let [math]\displaystyle{ X=\sum_{S\in{V\choose k}}X_S }[/math] be the number of [math]\displaystyle{ H }[/math]-subgraphs.

- [math]\displaystyle{ \mathbf{E}[X]=\Theta(n^kp^{\ell}) }[/math].

By Markov's inequality, [math]\displaystyle{ \Pr[X\ge 1]\le \mathbf{E}[X]=\Theta(n^kp^{\ell}) }[/math] which is [math]\displaystyle{ o(1) }[/math] when [math]\displaystyle{ p\ll n^{-k/\ell} }[/math].

By Chebyshev's inequality, [math]\displaystyle{ \Pr[X=0]\le \frac{\mathbf{Var}[X]}{\mathbf{E}[X]^2} }[/math] where

- [math]\displaystyle{ \mathbf{Var}[X]=\sum_{S\in{V\choose k}}\mathbf{Var}[X_S]+\sum_{S\neq T}\mathbf{Cov}(X_S,X_T) }[/math].

The first term [math]\displaystyle{ \sum_{S\in{V\choose k}}\mathbf{Var}[X_S]\le \sum_{S\in{V\choose k}}\mathbf{E}[X_S^2]= \sum_{S\in{V\choose k}}\mathbf{E}[X_S]=\mathbf{E}[X]=\Theta(n^kp^{\ell}) }[/math].

For the covariances, [math]\displaystyle{ \mathbf{Cov}(X_S,X_T)\neq 0 }[/math] only if [math]\displaystyle{ |S\cap T|=i }[/math] for [math]\displaystyle{ 2\le i\le k-1 }[/math]. Note that [math]\displaystyle{ |S\cap T|=i }[/math] implies that [math]\displaystyle{ |S\cup T|=2k-i }[/math]. And for balanced [math]\displaystyle{ H }[/math], the number of edges of interest in [math]\displaystyle{ S }[/math] and [math]\displaystyle{ T }[/math] is [math]\displaystyle{ 2\ell-i\rho(H_{S\cap T})\ge 2\ell-i\rho(H)=2\ell-i\ell/k }[/math]. Thus, [math]\displaystyle{ \mathbf{Cov}(X_S,X_T)\le\mathbf{E}[X_SX_T]\le p^{2\ell-i\ell/k} }[/math]. And,

- [math]\displaystyle{ \sum_{S\neq T}\mathbf{Cov}(X_S,X_T)=\sum_{i=2}^{k-1}O(n^{2k-i}p^{2\ell-i\ell/k}) }[/math]

Therefore, when [math]\displaystyle{ p\gg n^{-k/\ell} }[/math],

- [math]\displaystyle{ \Pr[X=0]\le \frac{\mathbf{Var}[X]}{\mathbf{E}[X]^2}\le \frac{\Theta(n^kp^{\ell})+\sum_{i=2}^{k-1}O(n^{2k-i}p^{2\ell-i\ell/k})}{\Theta(n^{2k}p^{2\ell})}=\Theta(n^{-k}p^{-\ell})+\sum_{i=2}^{k-1}O(n^{-i}p^{-i\ell/k})=o(1) }[/math].

- [math]\displaystyle{ \square }[/math]
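The definition of a balanced graph and the threshold exponent [math]\displaystyle{ -k/\ell }[/math] can be checked mechanically for small [math]\displaystyle{ H }[/math]. The following Python sketch is a brute-force check under stated assumptions: the names `is_balanced` and `threshold_exponent` are hypothetical, graphs are given as a vertex collection plus a list of edges, and the search is exponential in [math]\displaystyle{ k }[/math], so it is only meant for constant-size [math]\displaystyle{ H }[/math].

```python
import itertools


def is_balanced(vertices, edges):
    """Check rho(H') <= rho(H) for every subgraph H' of H = (vertices, edges)."""
    vertices = list(vertices)
    k, l = len(vertices), len(edges)
    for r in range(1, k + 1):
        for subset in itertools.combinations(vertices, r):
            chosen = set(subset)
            # For a fixed vertex set the induced subgraph has the most edges,
            # so it is enough to check induced subgraphs.
            induced = sum(1 for u, v in edges if u in chosen and v in chosen)
            # induced / r > l / k, compared without division.
            if induced * k > l * r:
                return False
    return True


def threshold_exponent(k, l):
    """Exponent a such that the threshold is p = n^a, i.e. a = -k / l."""
    return -k / l


if __name__ == "__main__":
    k4 = list(itertools.combinations(range(4), 2))
    print(is_balanced(range(4), k4), threshold_exponent(4, len(k4)))
    # K4 with a pendant vertex: the K4 inside is denser than the whole graph.
    print(is_balanced(range(5), k4 + [(3, 4)]))
```

For the 4-clique, [math]\displaystyle{ k=4 }[/math] and [math]\displaystyle{ \ell=6 }[/math], which recovers the exponent [math]\displaystyle{ -2/3 }[/math] of the previous theorem.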

The Chernoff Bound

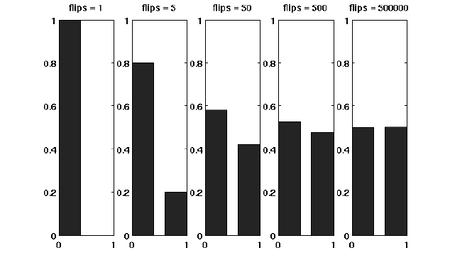

Suppose that we have a fair coin. If we toss it once, then the outcome is completely unpredictable. But if we toss it, say, 1000 times, then the number of HEADs is very likely to be around 500. This striking phenomenon, illustrated in the figure, is called concentration. The Chernoff bound captures the concentration of independent trials.

The Chernoff bound is also a tail bound for the sum of independent random variables which may give us exponentially sharp bounds.

Before proving the Chernoff bound, we should talk about the moment generating functions.

Moment generating functions

The more we know about the moments of a random variable [math]\displaystyle{ X }[/math], the more information we would have about [math]\displaystyle{ X }[/math]. There is a so-called moment generating function, which "packs" all the information about the moments of [math]\displaystyle{ X }[/math] into one function.

Definition - The moment generating function of a random variable [math]\displaystyle{ X }[/math] is defined as [math]\displaystyle{ \mathbf{E}\left[\mathrm{e}^{\lambda X}\right] }[/math] where [math]\displaystyle{ \lambda }[/math] is the parameter of the function.

By Taylor's expansion and the linearity of expectations,

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[\mathrm{e}^{\lambda X}\right] &= \mathbf{E}\left[\sum_{k=0}^\infty\frac{\lambda^k}{k!}X^k\right]\\ &=\sum_{k=0}^\infty\frac{\lambda^k}{k!}\mathbf{E}\left[X^k\right] \end{align} }[/math]

The moment generating function [math]\displaystyle{ \mathbf{E}\left[\mathrm{e}^{\lambda X}\right] }[/math] is a function of [math]\displaystyle{ \lambda }[/math].
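As a sanity check of the expansion, consider a single Bernoulli trial with [math]\displaystyle{ \Pr[X=1]=p }[/math] (an example chosen here for illustration). Then [math]\displaystyle{ \mathbf{E}[X^k]=p }[/math] for all [math]\displaystyle{ k\ge 1 }[/math], and the series should sum to the closed form [math]\displaystyle{ 1+p(e^\lambda-1) }[/math]. The Python sketch below compares the two numerically; the function names are assumptions.

```python
from math import exp, factorial


def bernoulli_mgf(p, lam):
    """Closed form E[e^{lam X}] = p e^{lam} + (1 - p) for X ~ Bernoulli(p)."""
    return p * exp(lam) + (1 - p)


def bernoulli_mgf_series(p, lam, terms=40):
    """Truncated series sum_k lam^k / k! * E[X^k], using E[X^0] = 1 and E[X^k] = p for k >= 1."""
    total = 1.0  # the k = 0 term
    for k in range(1, terms):
        total += lam ** k / factorial(k) * p
    return total


if __name__ == "__main__":
    print(bernoulli_mgf(0.3, 0.7), bernoulli_mgf_series(0.3, 0.7))
```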

The Chernoff bound

The Chernoff bounds are exponentially sharp tail inequalities for the sum of independent trials. The bounds are obtained by applying Markov's inequality to the moment generating function of the sum of independent trials, with some appropriate choice of the parameter [math]\displaystyle{ \lambda }[/math].

Chernoff bound (the upper tail) - Let [math]\displaystyle{ X=\sum_{i=1}^n X_i }[/math], where [math]\displaystyle{ X_1, X_2, \ldots, X_n }[/math] are independent Poisson trials. Let [math]\displaystyle{ \mu=\mathbf{E}[X] }[/math].

- Then for any [math]\displaystyle{ \delta\gt 0 }[/math],

- [math]\displaystyle{ \Pr[X\ge (1+\delta)\mu]\le\left(\frac{e^{\delta}}{(1+\delta)^{(1+\delta)}}\right)^{\mu}. }[/math]

Proof. For any [math]\displaystyle{ \lambda\gt 0 }[/math], [math]\displaystyle{ X\ge (1+\delta)\mu }[/math] is equivalent to [math]\displaystyle{ e^{\lambda X}\ge e^{\lambda (1+\delta)\mu} }[/math], thus

- [math]\displaystyle{ \begin{align} \Pr[X\ge (1+\delta)\mu] &= \Pr\left[e^{\lambda X}\ge e^{\lambda (1+\delta)\mu}\right]\\ &\le \frac{\mathbf{E}\left[e^{\lambda X}\right]}{e^{\lambda (1+\delta)\mu}}, \end{align} }[/math]

where the last step follows by Markov's inequality.

Computing the moment generating function [math]\displaystyle{ \mathbf{E}[e^{\lambda X}] }[/math]:

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[e^{\lambda X}\right] &= \mathbf{E}\left[e^{\lambda \sum_{i=1}^n X_i}\right]\\ &= \mathbf{E}\left[\prod_{i=1}^n e^{\lambda X_i}\right]\\ &= \prod_{i=1}^n \mathbf{E}\left[e^{\lambda X_i}\right]. & (\mbox{for independent random variables}) \end{align} }[/math]

Let [math]\displaystyle{ p_i=\Pr[X_i=1] }[/math] for [math]\displaystyle{ i=1,2,\ldots,n }[/math]. Then,

- [math]\displaystyle{ \mu=\mathbf{E}[X]=\mathbf{E}\left[\sum_{i=1}^n X_i\right]=\sum_{i=1}^n\mathbf{E}[X_i]=\sum_{i=1}^n p_i }[/math].

We bound the moment generating function for each individual [math]\displaystyle{ X_i }[/math] as follows.

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[e^{\lambda X_i}\right] &= p_i\cdot e^{\lambda\cdot 1}+(1-p_i)\cdot e^{\lambda\cdot 0}\\ &= 1+p_i(e^\lambda -1)\\ &\le e^{p_i(e^\lambda-1)}, \end{align} }[/math]

where in the last step we use the inequality [math]\displaystyle{ e^y\ge 1+y }[/math], which follows from Taylor's expansion, with [math]\displaystyle{ y=p_i(e^\lambda-1)\ge 0 }[/math]. (By doing this, we can transform the product to the sum of [math]\displaystyle{ p_i }[/math], which is [math]\displaystyle{ \mu }[/math].)

Therefore,

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[e^{\lambda X}\right] &= \prod_{i=1}^n \mathbf{E}\left[e^{\lambda X_i}\right]\\ &\le \prod_{i=1}^n e^{p_i(e^\lambda-1)}\\ &= \exp\left(\sum_{i=1}^n p_i(e^{\lambda}-1)\right)\\ &= e^{(e^\lambda-1)\mu}. \end{align} }[/math]

Thus, we have shown that for any [math]\displaystyle{ \lambda\gt 0 }[/math],

- [math]\displaystyle{ \begin{align} \Pr[X\ge (1+\delta)\mu] &\le \frac{\mathbf{E}\left[e^{\lambda X}\right]}{e^{\lambda (1+\delta)\mu}}\\ &\le \frac{e^{(e^\lambda-1)\mu}}{e^{\lambda (1+\delta)\mu}}\\ &= \left(\frac{e^{(e^\lambda-1)}}{e^{\lambda (1+\delta)}}\right)^\mu \end{align} }[/math].

For any [math]\displaystyle{ \delta\gt 0 }[/math], we can let [math]\displaystyle{ \lambda=\ln(1+\delta)\gt 0 }[/math] to get

- [math]\displaystyle{ \Pr[X\ge (1+\delta)\mu]\le\left(\frac{e^{\delta}}{(1+\delta)^{(1+\delta)}}\right)^{\mu}. }[/math]

- [math]\displaystyle{ \square }[/math]

The idea of the proof is actually quite clear: we apply Markov's inequality to [math]\displaystyle{ e^{\lambda X} }[/math] and for the rest, we just estimate the moment generating function [math]\displaystyle{ \mathbf{E}[e^{\lambda X}] }[/math]. To make the bound as tight as possible, we minimize [math]\displaystyle{ \frac{e^{(e^\lambda-1)}}{e^{\lambda (1+\delta)}} }[/math] by setting [math]\displaystyle{ \lambda=\ln(1+\delta) }[/math], which can be justified by taking derivatives of [math]\displaystyle{ \frac{e^{(e^\lambda-1)}}{e^{\lambda (1+\delta)}} }[/math].
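Both points can be checked numerically. The Python sketch below compares the upper-tail bound with an exact binomial tail for 1000 fair coin tosses, and scans [math]\displaystyle{ \lambda }[/math] over a grid to confirm that [math]\displaystyle{ \lambda=\ln(1+\delta) }[/math] gives the smallest value of the intermediate bound. The function names and the choice [math]\displaystyle{ n=1000 }[/math], [math]\displaystyle{ \delta=0.1 }[/math] are assumptions made for illustration.

```python
from math import comb, exp, log


def chernoff_upper(mu, delta):
    """The bound (e^delta / (1 + delta)^(1 + delta))^mu."""
    return (exp(delta) / (1 + delta) ** (1 + delta)) ** mu


def bound_for_lambda(lam, mu, delta):
    """The intermediate bound (e^(e^lam - 1) / e^(lam (1 + delta)))^mu."""
    return exp(mu * (exp(lam) - 1 - lam * (1 + delta)))


def binomial_upper_tail(n, p, k0):
    """Exact Pr[X >= k0] for X ~ Binomial(n, p), with integer k0."""
    return sum(comb(n, k) * p ** k * (1 - p) ** (n - k) for k in range(k0, n + 1))


if __name__ == "__main__":
    n, p, delta = 1000, 0.5, 0.1
    mu = n * p
    print("exact tail Pr[X >= 550]:", binomial_upper_tail(n, p, 550))
    print("Chernoff bound         :", chernoff_upper(mu, delta))
    print("bound at ln(1 + delta) :", bound_for_lambda(log(1 + delta), mu, delta))
```

The Chernoff bound is not tight here, but it already decays exponentially in [math]\displaystyle{ \mu }[/math], which the exact tail does as well.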

We then proceed to the lower tail, the probability that the random variable deviates below the mean value:

Chernoff bound (the lower tail) - Let [math]\displaystyle{ X=\sum_{i=1}^n X_i }[/math], where [math]\displaystyle{ X_1, X_2, \ldots, X_n }[/math] are independent Poisson trials. Let [math]\displaystyle{ \mu=\mathbf{E}[X] }[/math].

- Then for any [math]\displaystyle{ 0\lt \delta\lt 1 }[/math],

- [math]\displaystyle{ \Pr[X\le (1-\delta)\mu]\le\left(\frac{e^{-\delta}}{(1-\delta)^{(1-\delta)}}\right)^{\mu}. }[/math]

Proof. For any [math]\displaystyle{ \lambda\lt 0 }[/math], by the same analysis as in the upper tail version,

- [math]\displaystyle{ \begin{align} \Pr[X\le (1-\delta)\mu] &= \Pr\left[e^{\lambda X}\ge e^{\lambda (1-\delta)\mu}\right]\\ &\le \frac{\mathbf{E}\left[e^{\lambda X}\right]}{e^{\lambda (1-\delta)\mu}}\\ &\le \left(\frac{e^{(e^\lambda-1)}}{e^{\lambda (1-\delta)}}\right)^\mu. \end{align} }[/math]

For any [math]\displaystyle{ 0\lt \delta\lt 1 }[/math], we can let [math]\displaystyle{ \lambda=\ln(1-\delta)\lt 0 }[/math] to get

- [math]\displaystyle{ \Pr[X\le (1-\delta)\mu]\le\left(\frac{e^{-\delta}}{(1-\delta)^{(1-\delta)}}\right)^{\mu}. }[/math]

- [math]\displaystyle{ \square }[/math]

Some useful special forms of the bounds can be derived directly from the above general forms of the bounds. We now know better why we say that the bounds are exponentially sharp.

Useful forms of the Chernoff bound - Let [math]\displaystyle{ X=\sum_{i=1}^n X_i }[/math], where [math]\displaystyle{ X_1, X_2, \ldots, X_n }[/math] are independent Poisson trials. Let [math]\displaystyle{ \mu=\mathbf{E}[X] }[/math]. Then

- 1. for [math]\displaystyle{ 0\lt \delta\le 1 }[/math],

- [math]\displaystyle{ \Pr[X\ge (1+\delta)\mu]\lt \exp\left(-\frac{\mu\delta^2}{3}\right); }[/math]

- [math]\displaystyle{ \Pr[X\le (1-\delta)\mu]\lt \exp\left(-\frac{\mu\delta^2}{2}\right); }[/math]

- 2. for [math]\displaystyle{ t\ge 2e\mu }[/math],

- [math]\displaystyle{ \Pr[X\ge t]\le 2^{-t}. }[/math]

Proof. To obtain the bounds in (1), we need to show that for [math]\displaystyle{ 0\lt \delta\lt 1 }[/math], [math]\displaystyle{ \frac{e^{\delta}}{(1+\delta)^{(1+\delta)}}\le e^{-\delta^2/3} }[/math] and [math]\displaystyle{ \frac{e^{-\delta}}{(1-\delta)^{(1-\delta)}}\le e^{-\delta^2/2} }[/math]. We can verify both inequalities by standard analysis techniques. To obtain the bound in (2), let [math]\displaystyle{ t=(1+\delta)\mu }[/math]. Then [math]\displaystyle{ \delta=t/\mu-1\ge 2e-1 }[/math]. Hence,

- [math]\displaystyle{ \begin{align} \Pr[X\ge(1+\delta)\mu] &\le \left(\frac{e^\delta}{(1+\delta)^{(1+\delta)}}\right)^\mu\\ &\le \left(\frac{e}{1+\delta}\right)^{(1+\delta)\mu}\\ &\le \left(\frac{e}{2e}\right)^t\\ &\le 2^{-t} \end{align} }[/math]

- [math]\displaystyle{ \square }[/math]
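The two inequalities behind (1) are left to standard analysis in the proof above. As a numerical sanity check (not a proof), the Python sketch below compares the logarithms of both sides on a grid of [math]\displaystyle{ \delta }[/math] values. The function names and the grid resolution are assumptions.

```python
from math import log1p


def log_upper_base(delta):
    """ln of e^delta / (1 + delta)^(1 + delta)."""
    return delta - (1 + delta) * log1p(delta)


def log_lower_base(delta):
    """ln of e^(-delta) / (1 - delta)^(1 - delta), for 0 < delta < 1."""
    return -delta - (1 - delta) * log1p(-delta)


def check_useful_forms(steps=1000):
    """Check both inequalities at delta = i / steps for 0 < i < steps, plus delta = 1 for the upper tail."""
    for i in range(1, steps):
        d = i / steps
        if log_upper_base(d) > -d * d / 3:
            return False
        if log_lower_base(d) > -d * d / 2:
            return False
    return log_upper_base(1.0) <= -1.0 / 3


if __name__ == "__main__":
    print(check_useful_forms())
```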

Balls into bins, revisited

Throwing [math]\displaystyle{ m }[/math] balls uniformly and independently to [math]\displaystyle{ n }[/math] bins, what is the maximum load of all bins with high probability? In the last class, we gave an analysis of this problem by using a counting argument.

Now we give a more "advanced" analysis by using Chernoff bounds.

For any [math]\displaystyle{ i\in[n] }[/math] and [math]\displaystyle{ j\in[m] }[/math], let [math]\displaystyle{ X_{ij} }[/math] be the indicator variable for the event that ball [math]\displaystyle{ j }[/math] is thrown to bin [math]\displaystyle{ i }[/math]. Obviously

- [math]\displaystyle{ \mathbf{E}[X_{ij}]=\Pr[\mbox{ball }j\mbox{ is thrown to bin }i]=\frac{1}{n} }[/math]

Let [math]\displaystyle{ Y_i=\sum_{j\in[m]}X_{ij} }[/math] be the load of bin [math]\displaystyle{ i }[/math].

Then the expected load of bin [math]\displaystyle{ i }[/math] is

[math]\displaystyle{ (*)\qquad \mu=\mathbf{E}[Y_i]=\mathbf{E}\left[\sum_{j\in[m]}X_{ij}\right]=\sum_{j\in[m]}\mathbf{E}[X_{ij}]=m/n. }[/math]

For the case [math]\displaystyle{ m=n }[/math], it holds that [math]\displaystyle{ \mu=1 }[/math].

Note that [math]\displaystyle{ Y_i }[/math] is a sum of [math]\displaystyle{ m }[/math] mutually independent indicator variables. Applying the Chernoff bound, for any particular bin [math]\displaystyle{ i\in[n] }[/math],

- [math]\displaystyle{ \Pr[Y_i\gt (1+\delta)\mu] \le \left(\frac{e^{\delta}}{(1+\delta)^{1+\delta}}\right)^\mu. }[/math]

When [math]\displaystyle{ m=n }[/math]

When [math]\displaystyle{ m=n }[/math], [math]\displaystyle{ \mu=1 }[/math]. Write [math]\displaystyle{ c=1+\delta }[/math]. The above bound can be written as

- [math]\displaystyle{ \Pr[Y_i\gt c] \le \frac{e^{c-1}}{c^c}. }[/math]

Let [math]\displaystyle{ c=\frac{e\ln n}{\ln\ln n} }[/math]. We evaluate [math]\displaystyle{ \frac{e^{c-1}}{c^c} }[/math] by taking the logarithm of its reciprocal. For sufficiently large [math]\displaystyle{ n }[/math],

- [math]\displaystyle{ \begin{align} \ln\left(\frac{c^c}{e^{c-1}}\right) &= c\ln c-c+1\\ &= c(\ln c-1)+1\\ &= \frac{e\ln n}{\ln\ln n}\left(\ln\ln n-\ln\ln\ln n\right)+1\\ &\ge \frac{e\ln n}{\ln\ln n}\cdot\frac{2}{e}\ln\ln n+1\\ &\ge 2\ln n. \end{align} }[/math]

Thus,

- [math]\displaystyle{ \Pr\left[Y_i\gt \frac{e\ln n}{\ln\ln n}\right] \le \frac{1}{n^2}. }[/math]

Applying the union bound, the probability that there exists a bin with load [math]\displaystyle{ \gt \frac{e\ln n}{\ln\ln n} }[/math] is at most

- [math]\displaystyle{ n\cdot \Pr\left[Y_1\gt \frac{e\ln n}{\ln\ln n}\right] \le \frac{1}{n} }[/math].

Therefore, for [math]\displaystyle{ m=n }[/math], with high probability, the maximum load is [math]\displaystyle{ O\left(\frac{\ln n}{\ln\ln n}\right) }[/math].
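A small simulation makes the statement concrete. The Python sketch below throws [math]\displaystyle{ m }[/math] balls into [math]\displaystyle{ n }[/math] bins and reports the maximum load next to the reference value [math]\displaystyle{ \frac{e\ln n}{\ln\ln n} }[/math]. The function names, the seed and the choice [math]\displaystyle{ n=1000 }[/math] are assumptions; the analysis above is asymptotic, so the simulation is only an illustration, not a verification.

```python
import random
from math import e, log


def max_load(m, n, rng=None):
    """Throw m balls uniformly and independently into n bins; return the maximum load."""
    if rng is None:
        rng = random.Random()
    loads = [0] * n
    for _ in range(m):
        loads[rng.randrange(n)] += 1
    return max(loads)


def reference_bound(n):
    """The value e * ln(n) / ln(ln(n)) from the analysis (requires n >= 3)."""
    return e * log(n) / log(log(n))


if __name__ == "__main__":
    rng = random.Random(2013)
    n = 1000
    print("reference bound:", reference_bound(n))
    print("observed maximum loads:", [max_load(n, n, rng) for _ in range(5)])
```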

For larger [math]\displaystyle{ m }[/math]

When [math]\displaystyle{ m\ge n\ln n }[/math], according to [math]\displaystyle{ (*) }[/math], [math]\displaystyle{ \mu=\frac{m}{n}\ge \ln n }[/math].

We can apply an easier form of the Chernoff bounds,

- [math]\displaystyle{ \Pr[Y_i\ge 2e\mu]\le 2^{-2e\mu}\le 2^{-2e\ln n}\lt \frac{1}{n^2}. }[/math]

By the union bound, the probability that there exists a bin with load [math]\displaystyle{ \ge 2e\frac{m}{n} }[/math] is,

- [math]\displaystyle{ n\cdot \Pr\left[Y_1\gt 2e\frac{m}{n}\right] = n\cdot \Pr\left[Y_1\gt 2e\mu\right]\le \frac{1}{n} }[/math].

Therefore, for [math]\displaystyle{ m\ge n\ln n }[/math], with high probability, the maximum load is [math]\displaystyle{ O\left(\frac{m}{n}\right) }[/math].
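The arithmetic of this last argument can be evaluated directly. The Python sketch below computes the per-bin bound [math]\displaystyle{ 2^{-2e\mu} }[/math] and the resulting union bound for a few values of [math]\displaystyle{ n }[/math] with [math]\displaystyle{ m=\lceil n\ln n\rceil }[/math]. The function names and the sample values of [math]\displaystyle{ n }[/math] are assumptions.

```python
from math import ceil, e, log


def per_bin_bound(m, n):
    """Chernoff bound 2^(-2e*mu) on Pr[Y_i >= 2e*mu], where mu = m / n."""
    return 2.0 ** (-2 * e * m / n)


def union_bound(m, n):
    """Union bound over n bins on the probability that some bin has load >= 2e*m/n."""
    return n * per_bin_bound(m, n)


if __name__ == "__main__":
    for n in (10, 100, 1000, 10 ** 5):
        m = ceil(n * log(n))
        print(n, m, per_bin_bound(m, n), union_bound(m, n), 1 / n)
```

Since [math]\displaystyle{ 2^{-2e\ln n}=n^{-2e\ln 2} }[/math] and [math]\displaystyle{ 2e\ln 2\gt 3 }[/math], the per-bin bound is in fact smaller than [math]\displaystyle{ n^{-3} }[/math], so the stated [math]\displaystyle{ 1/n^2 }[/math] leaves some slack.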