Randomized Algorithms (Spring 2010)/Tail inequalities

When applying probabilistic analysis, we often want a bound of the form [math]\displaystyle{ \Pr[X\ge t]\lt \epsilon }[/math] for some random variable [math]\displaystyle{ X }[/math] (think of [math]\displaystyle{ X }[/math] as a cost, such as the running time of a randomized algorithm). We call this a tail bound, or a tail inequality.

In principle, we can bound [math]\displaystyle{ \Pr[X\ge t] }[/math] by directly estimating the probability of the event that [math]\displaystyle{ X\ge t }[/math]. Besides this ad hoc approach, we would like general tools that estimate tail probabilities from limited information about the random variable.

Markov's inequality

One of the most natural pieces of information about a random variable is its expectation. Markov's inequality gives a tail bound using only the expectation.

Theorem (Markov's Inequality):

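Let [math]\displaystyle{ X }[/math] be a random variable assuming only nonnegative values. Then, for all [math]\displaystyle{ t\gt 0 }[/math],

- [math]\displaystyle{ \Pr[X\ge t]\le \frac{\mathbf{E}[X]}{t}. }[/math]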

Proof: Let [math]\displaystyle{ Y }[/math] be the indicator such that

- [math]\displaystyle{ \begin{align} Y &= \begin{cases} 1 & \mbox{if }X\ge t,\\ 0 & \mbox{otherwise.} \end{cases} \end{align} }[/math]

Since [math]\displaystyle{ X }[/math] is nonnegative and [math]\displaystyle{ t\gt 0 }[/math], it holds that [math]\displaystyle{ Y\le\frac{X}{t} }[/math]. Since [math]\displaystyle{ Y }[/math] is 0-1 valued, [math]\displaystyle{ \mathbf{E}[Y]=\Pr[Y=1]=\Pr[X\ge t] }[/math]. Therefore,

- [math]\displaystyle{ \Pr[X\ge t] = \mathbf{E}[Y] \le \mathbf{E}\left[\frac{X}{t}\right] =\frac{\mathbf{E}[X]}{t}. }[/math]

[math]\displaystyle{ \square }[/math]
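As a quick sanity check of the theorem, the following Python sketch compares the exact tail probability of a fair six-sided die with the Markov bound. The die and the helper names `exact_tail` and `markov_bound` are illustrative assumptions, not part of the original notes.

```python
from fractions import Fraction

# Hypothetical example: X is the outcome of a fair six-sided die.
outcomes = range(1, 7)
prob = Fraction(1, 6)

expectation = sum(x * prob for x in outcomes)  # E[X] = 7/2


def exact_tail(t):
    """Exact Pr[X >= t] for the die."""
    return sum(prob for x in outcomes if x >= t)


def markov_bound(t):
    """Markov's bound E[X]/t, valid for nonnegative X and t > 0."""
    return expectation / t


for t in range(1, 7):
    print(t, exact_tail(t), markov_bound(t))
```

For every threshold the exact tail stays below the bound, but the gap is large, which is expected from a bound that uses only the expectation.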

The Moments Technique

Markov's inequality is the best we can get if all we know about the random variable is its expectation. Additional information about a random variable is expressed in terms of its moments.

Definition (moments):

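The [math]\displaystyle{ k }[/math]th moment of a random variable [math]\displaystyle{ X }[/math] is [math]\displaystyle{ \mathbf{E}[X^k] }[/math].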

Variance

Given the first and the second moments, the variance of the random variable can be computed.

Definition (variance):

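The variance of a random variable [math]\displaystyle{ X }[/math] is defined as

- [math]\displaystyle{ \mathbf{Var}[X]=\mathbf{E}\left[(X-\mathbf{E}[X])^2\right]=\mathbf{E}\left[X^2\right]-(\mathbf{E}[X])^2. }[/math]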

Chebyshev's inequality

Given the expectation and the variance of a random variable, one can derive a stronger tail bound known as Chebyshev's inequality.

Theorem (Chebyshev's Inequality):

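For any [math]\displaystyle{ t\gt 0 }[/math],

- [math]\displaystyle{ \Pr\left[|X-\mathbf{E}[X]| \ge t\right] \le \frac{\mathbf{Var}[X]}{t^2}. }[/math]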

Proof: Observe that

- [math]\displaystyle{ \Pr[|X-\mathbf{E}[X]| \ge t] = \Pr[(X-\mathbf{E}[X])^2 \ge t^2]. }[/math]

Since [math]\displaystyle{ (X-\mathbf{E}[X])^2 }[/math] is a nonnegative random variable, we can apply Markov's inequality to obtain

- [math]\displaystyle{ \Pr[(X-\mathbf{E}[X])^2 \ge t^2] \le \frac{\mathbf{E}[(X-\mathbf{E}[X])^2]}{t^2} =\frac{\mathbf{Var}[X]}{t^2}. }[/math]

[math]\displaystyle{ \square }[/math]
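To see how much the variance helps, the sketch below compares the Markov and Chebyshev bounds with the exact tail of a binomial random variable. The choice of 1000 fair coin tosses and a deviation of 50 is an illustrative assumption.

```python
from math import comb

n = 1000          # number of fair coin tosses (illustrative choice)
t = 50            # deviation from the mean

mean = n / 2      # E[X] for X ~ Binomial(n, 1/2)
variance = n / 4  # Var[X] = n * p * (1 - p) with p = 1/2

# Exact two-sided tail Pr[|X - E[X]| >= t], computed with integer arithmetic.
favourable = sum(comb(n, k) for k in range(n + 1) if abs(k - mean) >= t)
exact = favourable / 2**n

chebyshev = variance / t**2   # bound on Pr[|X - E[X]| >= t]
markov = mean / (mean + t)    # bound on the one-sided tail Pr[X >= E[X] + t]

print(f"exact={exact:.6f} chebyshev={chebyshev:.3f} markov={markov:.3f}")
```

Note that the Markov bound here only covers the upper tail, yet it is still far weaker than the two-sided Chebyshev bound.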

Higher moments

The more we know about the moments, the more information we have about the distribution, hence in principle, we can get tighter tail bounds. This technique is called the [math]\displaystyle{ k }[/math]th moment method.

We know that the [math]\displaystyle{ k }[/math]th moment is [math]\displaystyle{ \mathbf{E}[X^k] }[/math]. More generally, the [math]\displaystyle{ k }[/math]th moment about [math]\displaystyle{ c }[/math] is [math]\displaystyle{ \mathbf{E}[(X-c)^k] }[/math]. The central moment of [math]\displaystyle{ X }[/math], denoted [math]\displaystyle{ \mu_k[X] }[/math], is defined as [math]\displaystyle{ \mu_k[X]=\mathbf{E}[(X-\mathbf{E}[X])^k] }[/math]. So the variance is just the second central moment [math]\displaystyle{ \mu_2[X] }[/math].

The [math]\displaystyle{ k }[/math]th moment method is stated by the following theorem.

Theorem (the [math]\displaystyle{ k }[/math]th moment method):

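For even [math]\displaystyle{ k\gt 0 }[/math] and any [math]\displaystyle{ t\gt 0 }[/math],

- [math]\displaystyle{ \Pr\left[|X-\mathbf{E}[X]| \ge t\right] \le \frac{\mathbf{E}\left[(X-\mathbf{E}[X])^k\right]}{t^k}. }[/math]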

Proof: Apply Markov's inequality to [math]\displaystyle{ (X-\mathbf{E}[X])^k }[/math].

[math]\displaystyle{ \square }[/math]

What about odd [math]\displaystyle{ k }[/math]? For odd [math]\displaystyle{ k }[/math], we should apply Markov's inequality to [math]\displaystyle{ |X-\mathbf{E}[X]|^k }[/math], but estimating expectations of absolute values can be hard.
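The effect of using higher even moments can be sketched numerically. The code below computes central moments of a binomial random variable exactly and evaluates the resulting bounds; the parameters (100 fair coin tosses, deviation 20) and the function names are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb


def central_moment(n, k):
    """k-th central moment of X ~ Binomial(n, 1/2), computed exactly."""
    mean = Fraction(n, 2)
    return sum(Fraction(comb(n, j), 2**n) * (j - mean) ** k for j in range(n + 1))


def moment_bound(n, k, t):
    """k-th moment bound E[(X - E[X])^k] / t^k for even k."""
    assert k % 2 == 0, "the bound is stated for even k"
    return central_moment(n, k) / Fraction(t) ** k


n, t = 100, 20
for k in (2, 4, 6):
    print(k, float(moment_bound(n, k, t)))
```

With `k = 2` this is exactly Chebyshev's inequality; for this example the fourth-moment bound is already noticeably smaller.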

Select the Median

The selection problem is the problem of finding the [math]\displaystyle{ k }[/math]th smallest element in a set [math]\displaystyle{ S }[/math]. A typical case of the selection problem is finding the median.

Definition

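The median of a set [math]\displaystyle{ S }[/math] of [math]\displaystyle{ n }[/math] elements is the [math]\displaystyle{ \left\lceil\frac{n}{2}\right\rceil }[/math]th element in the sorted order of [math]\displaystyle{ S }[/math].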

The median can be found in [math]\displaystyle{ O(n\log n) }[/math] time by sorting. There is also a linear-time deterministic algorithm, the "median of medians" algorithm, which is quite sophisticated. Here we introduce a much simpler randomized algorithm which also runs in linear time.

Randomized median algorithm

The idea of this algorithm is random sampling. For a set [math]\displaystyle{ S }[/math], let [math]\displaystyle{ m\in S }[/math] denote the median. Suppose we can find two elements [math]\displaystyle{ d,u\in S }[/math] satisfying the following properties:

- The median is between [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] in the sorted order, i.e. [math]\displaystyle{ d\le m\le u }[/math];

- The total number of elements between [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] is small, specifically for [math]\displaystyle{ C=\{x\in S\mid d\le x\le u\} }[/math], [math]\displaystyle{ |C|=o(n/\log n) }[/math].

Given [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] with these two properties, we can in linear time compute the rank of [math]\displaystyle{ d }[/math] in [math]\displaystyle{ S }[/math], construct [math]\displaystyle{ C }[/math], and sort [math]\displaystyle{ C }[/math]. Therefore, the median [math]\displaystyle{ m }[/math] of [math]\displaystyle{ S }[/math] can be picked from [math]\displaystyle{ C }[/math] in linear time.

So how can we select such elements [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] from [math]\displaystyle{ S }[/math]? Certainly sorting [math]\displaystyle{ S }[/math] would give us the elements, but isn't that exactly what we want to avoid in the first place?

Observe that [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] are only required to satisfy the constraints roughly. This suggests that we can construct a sketch of [math]\displaystyle{ S }[/math] which is small enough to sort cheaply and roughly represents [math]\displaystyle{ S }[/math], and then pick [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] from this sketch. We construct the sketch by randomly sampling a relatively small number of elements from [math]\displaystyle{ S }[/math]. The strategy of the algorithm is then as follows:

- Sample a set [math]\displaystyle{ R }[/math] of elements from [math]\displaystyle{ S }[/math].

- Sort [math]\displaystyle{ R }[/math] and choose [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] somewhere around the median of [math]\displaystyle{ R }[/math].

- If [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] have the desired properties, we can compute the median in linear time; otherwise the algorithm fails.

The parameters to be fixed are: the size of [math]\displaystyle{ R }[/math] (small enough to sort in linear time and large enough to contain sufficient information about [math]\displaystyle{ S }[/math]); and the ranks of [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] in [math]\displaystyle{ R }[/math] (far enough apart that [math]\displaystyle{ m }[/math] lies between them, and close enough that [math]\displaystyle{ C }[/math] can be sorted in linear time).

We choose the size of [math]\displaystyle{ R }[/math] as [math]\displaystyle{ n^{3/4} }[/math], and [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math] are chosen within a range of [math]\displaystyle{ \sqrt{n} }[/math] around the median of [math]\displaystyle{ R }[/math].

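Randomized Median Algorithm:

Input: a set [math]\displaystyle{ S }[/math] of [math]\displaystyle{ n }[/math] elements over a totally ordered domain.

1. Pick a multiset [math]\displaystyle{ R }[/math] of [math]\displaystyle{ \left\lceil n^{3/4}\right\rceil }[/math] elements from [math]\displaystyle{ S }[/math], chosen independently and uniformly at random with replacement, and sort [math]\displaystyle{ R }[/math].

2. Let [math]\displaystyle{ d }[/math] be the [math]\displaystyle{ \left\lfloor\frac{1}{2}n^{3/4}-\sqrt{n}\right\rfloor }[/math]-th smallest element in [math]\displaystyle{ R }[/math], and let [math]\displaystyle{ u }[/math] be the [math]\displaystyle{ \left\lceil\frac{1}{2}n^{3/4}+\sqrt{n}\right\rceil }[/math]-th smallest element in [math]\displaystyle{ R }[/math].

3. Construct [math]\displaystyle{ C=\{x\in S\mid d\le x\le u\} }[/math] and compute the ranks [math]\displaystyle{ r_d=|\{x\in S\mid x\lt d\}| }[/math] and [math]\displaystyle{ r_u=|\{x\in S\mid x\gt u\}| }[/math].

4. If [math]\displaystyle{ r_d\gt \frac{n}{2} }[/math] or [math]\displaystyle{ r_u\gt \frac{n}{2} }[/math] or [math]\displaystyle{ |C|\gt 4n^{3/4} }[/math], then return FAIL.

5. Sort [math]\displaystyle{ C }[/math] and return the [math]\displaystyle{ \left(\left\lceil\frac{n}{2}\right\rceil-r_d\right) }[/math]-th element in the sorted order of [math]\displaystyle{ C }[/math].

"Sample with replacement" means that after sampling an element, we put the element back into the set. In this way, the sampled elements are independent and identically distributed (i.i.d.). The algorithm samples with replacement only because it simplifies the analysis.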

Analysis

The algorithm always terminates in linear time because each line of the algorithm costs at most linear time. The last three lines guarantee that the algorithm returns the correct median if it does not fail.

We then only need to bound the probability that the algorithm returns FAIL. Let [math]\displaystyle{ m\in S }[/math] be the median of [math]\displaystyle{ S }[/math]. By Line 4, we know that the algorithm returns FAIL if and only if at least one of the following events occurs:
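The sampling strategy described above can be sketched in Python as follows. This is a minimal sketch rather than a definitive implementation: the function name `randomized_median`, the `rng` parameter, and the use of `None` to signal FAIL are assumptions made for illustration, and the elements of `S` are assumed to be distinct. The failure test is written in terms of the median's rank [math]\displaystyle{ \left\lceil\frac{n}{2}\right\rceil }[/math] so that it also behaves correctly for even [math]\displaystyle{ n }[/math]; for odd [math]\displaystyle{ n }[/math] it coincides with the conditions [math]\displaystyle{ r_d\gt \frac{n}{2} }[/math] and [math]\displaystyle{ r_u\gt \frac{n}{2} }[/math] discussed in the analysis.

```python
import math
import random


def randomized_median(S, rng=random):
    """One run of the sampling-based median algorithm.

    Returns the ceil(n/2)-th smallest element of S, or None on FAIL.
    Assumes the elements of S are distinct.
    """
    S = list(S)
    n = len(S)
    k = math.ceil(n / 2)                          # rank of the median in S
    r = math.ceil(n ** 0.75)                      # sample size |R|
    lo = math.floor(0.5 * n ** 0.75 - math.sqrt(n))
    hi = math.ceil(0.5 * n ** 0.75 + math.sqrt(n))
    if lo < 1 or hi > r:
        return None                               # n too small for these parameters

    # Sample with replacement and sort the sample.
    R = sorted(rng.choice(S) for _ in range(r))
    # Pick d and u around the median of R (1-based ranks lo and hi).
    d, u = R[lo - 1], R[hi - 1]
    # Build C and count the elements below d and above u.
    C = [x for x in S if d <= x <= u]
    r_d = sum(1 for x in S if x < d)
    r_u = sum(1 for x in S if x > u)
    # FAIL if the median lies outside [d, u] or C is too large to sort cheaply.
    if r_d >= k or r_u > n - k or len(C) > 4 * n ** 0.75:
        return None
    # The median has rank k in S, hence rank k - r_d in C.
    C.sort()
    return C[k - r_d - 1]
```

Since a single run may fail, a caller would typically repeat the call until it returns a value; every non-`None` result is the exact median.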

- [math]\displaystyle{ \mathcal{E}_1: Y=|\{x\in R\mid x\le m\}|\lt \frac{1}{2}n^{3/4}-\sqrt{n} }[/math];

- [math]\displaystyle{ \mathcal{E}_2: Z=|\{x\in R\mid x\ge m\}|\lt \frac{1}{2}n^{3/4}-\sqrt{n} }[/math];

- [math]\displaystyle{ \mathcal{E}_3: |C|\gt 4n^{3/4} }[/math].

[math]\displaystyle{ \mathcal{E}_3 }[/math] is exactly the third condition in Line 4. [math]\displaystyle{ \mathcal{E}_1 }[/math] and [math]\displaystyle{ \mathcal{E}_2 }[/math] are a bit trickier. The first condition in Line 4 is [math]\displaystyle{ r_d\gt \frac{n}{2} }[/math], which does not look the same as [math]\displaystyle{ \mathcal{E}_1 }[/math]. However, both [math]\displaystyle{ \mathcal{E}_1 }[/math] and [math]\displaystyle{ r_d\gt \frac{n}{2} }[/math] are equivalent to the same event: the [math]\displaystyle{ \left\lfloor\frac{1}{2}n^{3/4}-\sqrt{n}\right\rfloor }[/math]-th smallest element in [math]\displaystyle{ R }[/math] is greater than [math]\displaystyle{ m }[/math]. Hence they are equivalent to each other. Similarly, [math]\displaystyle{ \mathcal{E}_2 }[/math] is equivalent to the second condition in Line 4.

We now bound the probabilities of these events one by one.

Lemma 1

- [math]\displaystyle{ \Pr[\mathcal{E}_1]\le\frac{1}{4}n^{-1/4}. }[/math]

Proof: Let [math]\displaystyle{ X_i }[/math] be the [math]\displaystyle{ i }[/math]th sampled element in Line 1 of the algorithm. Let [math]\displaystyle{ Y_i }[/math] be an indicator random variable such that

- [math]\displaystyle{ Y_i= \begin{cases} 1 & \mbox{if }X_i\le m,\\ 0 & \mbox{otherwise.} \end{cases} }[/math]

Clearly [math]\displaystyle{ Y=\sum_{i=1}^{n^{3/4}}Y_i }[/math], where [math]\displaystyle{ Y }[/math] is as defined in [math]\displaystyle{ \mathcal{E}_1 }[/math]. Each [math]\displaystyle{ X_i }[/math] is uniform over [math]\displaystyle{ S }[/math], and there are [math]\displaystyle{ \left\lceil\frac{n}{2}\right\rceil }[/math] elements in [math]\displaystyle{ S }[/math] that are less than or equal to the median. The probability that [math]\displaystyle{ Y_i=1 }[/math] is

- [math]\displaystyle{ p=\Pr[Y_i=1]=\Pr[X_i\le m]=\frac{1}{n}\left\lceil\frac{n}{2}\right\rceil, }[/math]

which is within the range of [math]\displaystyle{ \left[\frac{1}{2},\frac{1}{2}+\frac{1}{2n}\right] }[/math]. Thus

- [math]\displaystyle{ \mathbf{E}[Y]=n^{3/4}p\ge \frac{1}{2}n^{3/4}. }[/math]

The event [math]\displaystyle{ \mathcal{E}_1 }[/math] is defined as that [math]\displaystyle{ Y\lt \frac{1}{2}n^{3/4}-\sqrt{n} }[/math].

Note that the [math]\displaystyle{ Y_i }[/math]'s are Bernoulli trials, and [math]\displaystyle{ Y }[/math] is the sum of [math]\displaystyle{ n^{3/4} }[/math] Bernoulli trials, which follows the binomial distribution with parameters [math]\displaystyle{ n^{3/4} }[/math] and [math]\displaystyle{ p }[/math]. Thus, the variance is

- [math]\displaystyle{ \mathbf{Var}[Y]=n^{3/4}p(1-p)\le \frac{1}{4}n^{3/4}. }[/math]

Applying Chebyshev's inequality,

- [math]\displaystyle{ \begin{align} \Pr[\mathcal{E}_1] &= \Pr\left[Y\lt \frac{1}{2}n^{3/4}-\sqrt{n}\right]\\ &\le \Pr\left[|Y-\mathbf{E}[Y]|\gt \sqrt{n}\right]\\ &\le \frac{\mathbf{Var}[Y]}{n}\\ &\le\frac{1}{4}n^{-1/4}. \end{align} }[/math]

[math]\displaystyle{ \square }[/math]
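For a concrete feel of the lemma, the sketch below evaluates the event [math]\displaystyle{ \mathcal{E}_1 }[/math] exactly for one input size and compares it with the Chebyshev bound from the proof. The choice [math]\displaystyle{ n=10^4 }[/math] is an illustrative assumption; it gives [math]\displaystyle{ n^{3/4}=1000 }[/math], [math]\displaystyle{ \sqrt{n}=100 }[/math] and [math]\displaystyle{ p=\frac{1}{2} }[/math].

```python
from math import comb

n = 10_000
samples = 1_000      # n^{3/4}
threshold = 400      # n^{3/4}/2 - sqrt(n) = 500 - 100

# With n even, p = ceil(n/2)/n = 1/2, so Y ~ Binomial(1000, 1/2).
# E1 is the event Y < threshold, i.e. Y in {0, ..., threshold - 1}.
exact = sum(comb(samples, k) for k in range(threshold)) / 2**samples

chebyshev = 0.25 * n ** -0.25   # the bound (1/4) n^{-1/4} from the proof

print(f"exact Pr[E1] = {exact:.3e}, Chebyshev bound = {chebyshev:.3f}")
```

The exact probability is many orders of magnitude below the bound, which shows that Chebyshev's inequality is sufficient for the analysis but far from tight here.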

By a similar analysis, we can obtain the following bound for the event [math]\displaystyle{ \mathcal{E}_2 }[/math].

Lemma 2

- [math]\displaystyle{ \Pr[\mathcal{E}_2]\le\frac{1}{4}n^{-1/4}. }[/math]

We now bound the probability of the event [math]\displaystyle{ \mathcal{E}_3 }[/math].

Lemma 3

- [math]\displaystyle{ \Pr[\mathcal{E}_3]\le\frac{1}{2}n^{-1/4}. }[/math]

Proof: The event [math]\displaystyle{ \mathcal{E}_3 }[/math] is defined as [math]\displaystyle{ |C|\gt 4 n^{3/4} }[/math], which, by the Pigeonhole Principle, implies that at least one of the following must be true:

- [math]\displaystyle{ \mathcal{E}_3' }[/math]: at least [math]\displaystyle{ 2n^{3/4} }[/math] elements of [math]\displaystyle{ C }[/math] are greater than [math]\displaystyle{ m }[/math];

- [math]\displaystyle{ \mathcal{E}_3'' }[/math]: at least [math]\displaystyle{ 2n^{3/4} }[/math] elements of [math]\displaystyle{ C }[/math] are smaller than [math]\displaystyle{ m }[/math].

We bound the probability that [math]\displaystyle{ \mathcal{E}_3' }[/math] occurs; the second will have the same bound by symmetry.

Recall that [math]\displaystyle{ C }[/math] is the region in [math]\displaystyle{ S }[/math] between [math]\displaystyle{ d }[/math] and [math]\displaystyle{ u }[/math]. If there are at least [math]\displaystyle{ 2n^{3/4} }[/math] elements of [math]\displaystyle{ C }[/math] greater than the median [math]\displaystyle{ m }[/math] of [math]\displaystyle{ S }[/math], then the rank of [math]\displaystyle{ u }[/math] in the sorted order of [math]\displaystyle{ S }[/math] must be at least [math]\displaystyle{ \frac{1}{2}n+2n^{3/4} }[/math] and thus [math]\displaystyle{ R }[/math] has at least [math]\displaystyle{ \frac{1}{2}n^{3/4}-\sqrt{n} }[/math] samples among the [math]\displaystyle{ \frac{1}{2}n-2n^{3/4} }[/math] largest elements in [math]\displaystyle{ S }[/math].

Let [math]\displaystyle{ X_i\in\{0,1\} }[/math] indicate whether the [math]\displaystyle{ i }[/math]th sample is among the [math]\displaystyle{ \frac{1}{2}n-2n^{3/4} }[/math] largest elements in [math]\displaystyle{ S }[/math]. Let [math]\displaystyle{ X=\sum_{i=1}^{n^{3/4}}X_i }[/math] be the number of samples in [math]\displaystyle{ R }[/math] among the [math]\displaystyle{ \frac{1}{2}n-2n^{3/4} }[/math] largest elements in [math]\displaystyle{ S }[/math]. It holds that

- [math]\displaystyle{ p=\Pr[X_i=1]=\frac{\frac{1}{2}n-2n^{3/4}}{n}=\frac{1}{2}-2n^{-1/4} }[/math].

[math]\displaystyle{ X }[/math] is a binomial random variable with

- [math]\displaystyle{ \mathbf{E}[X]=n^{3/4}p=\frac{1}{2}n^{3/4}-2\sqrt{n}, }[/math]

and

- [math]\displaystyle{ \mathbf{Var}[X]=n^{3/4}p(1-p)=\frac{1}{4}n^{3/4}-4n^{1/4}\lt \frac{1}{4}n^{3/4}. }[/math]

Applying Chebyshev's inequality,

- [math]\displaystyle{ \begin{align} \Pr[\mathcal{E}_3'] &= \Pr\left[X\ge\frac{1}{2}n^{3/4}-\sqrt{n}\right]\\ &\le \Pr\left[|X-\mathbf{E}[X]|\ge\sqrt{n}\right]\\ &\le \frac{\mathbf{Var}[X]}{n}\\ &\le\frac{1}{4}n^{-1/4}. \end{align} }[/math]

Symmetrically, we have that [math]\displaystyle{ \Pr[\mathcal{E}_3'']\le\frac{1}{4}n^{-1/4} }[/math].

Applying the union bound

- [math]\displaystyle{ \Pr[\mathcal{E}_3]\le \Pr[\mathcal{E}_3']+\Pr[\mathcal{E}_3'']\le\frac{1}{2}n^{-1/4}. }[/math]

[math]\displaystyle{ \square }[/math]

Combining the three bounds with the union bound, the probability that the algorithm returns FAIL is at most

- [math]\displaystyle{ \Pr[\mathcal{E}_1]+\Pr[\mathcal{E}_2]+\Pr[\mathcal{E}_3]\le n^{-1/4}. }[/math]

Therefore the algorithm always terminates in linear time and returns the correct median with probability at least [math]\displaystyle{ 1-n^{-1/4} }[/math], that is, with high probability.

Chernoff Bound

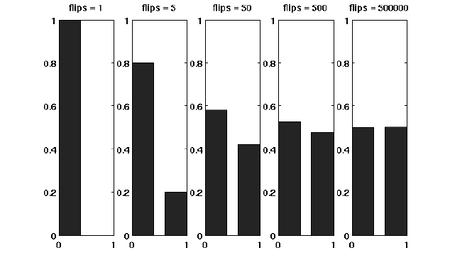

Suppose that we have a fair coin. If we toss it once, then the outcome is completely unpredictable. But if we toss it, say, 1000 times, then the number of HEADs is very likely to be around 500. This striking phenomenon, illustrated in the figure, is called concentration. The Chernoff bound captures the concentration of independent trials.

The Chernoff bound is a tail bound for sums of independent random variables, and it can give exponentially sharp bounds.

Before proving the Chernoff bound, we first introduce moment generating functions.

Moment generating functions

The more we know about the moments of a random variable [math]\displaystyle{ X }[/math], the more information we would have about [math]\displaystyle{ X }[/math]. There is a so-called moment generating function, which "packs" all the information about the moments of [math]\displaystyle{ X }[/math] into one function.

Definition:

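The moment generating function of a random variable [math]\displaystyle{ X }[/math] is defined as [math]\displaystyle{ \mathbf{E}\left[\mathrm{e}^{\lambda X}\right] }[/math], where [math]\displaystyle{ \lambda }[/math] is the parameter of the function.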

By Taylor's expansion and the linearity of expectations,

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[\mathrm{e}^{\lambda X}\right] &= \mathbf{E}\left[\sum_{k=0}^\infty\frac{\lambda^k}{k!}X^k\right]\\ &=\sum_{k=0}^\infty\frac{\lambda^k}{k!}\mathbf{E}\left[X^k\right] \end{align} }[/math]

The moment generating function [math]\displaystyle{ \mathbf{E}\left[\mathrm{e}^{\lambda X}\right] }[/math] is a function of [math]\displaystyle{ \lambda }[/math].
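The series expansion above can be checked numerically. The sketch below uses a small example distribution (uniform on [math]\displaystyle{ \{0,1,2,3\} }[/math], chosen only for illustration) and compares the moment generating function with a truncation of the moment series; the function names are hypothetical.

```python
import math

# Hypothetical example distribution: X uniform on {0, 1, 2, 3}.
values = [0, 1, 2, 3]
probs = [0.25] * 4


def moment(k):
    """k-th moment E[X^k]."""
    return sum(p * x**k for x, p in zip(values, probs))


def mgf(lam):
    """Moment generating function E[e^{lam X}]."""
    return sum(p * math.exp(lam * x) for x, p in zip(values, probs))


def mgf_series(lam, terms=30):
    """Truncated series sum_k lam^k / k! * E[X^k]."""
    return sum(lam**k / math.factorial(k) * moment(k) for k in range(terms))


lam = 0.5
print(mgf(lam), mgf_series(lam))
```

The two values agree up to floating-point error, which reflects that the coefficients of the series in [math]\displaystyle{ \lambda }[/math] are exactly the moments of [math]\displaystyle{ X }[/math] divided by [math]\displaystyle{ k! }[/math].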

The Chernoff bound

The Chernoff bounds are tail inequalities with exponential decays for the sum of independent trials. The bounds are obtained by applying Markov's inequality to the moment generating function of the sum of independent trials, with some appropriate choice of the parameter [math]\displaystyle{ \lambda }[/math].

Chernoff bound (the upper tail):

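Let [math]\displaystyle{ X=\sum_{i=1}^n X_i }[/math], where [math]\displaystyle{ X_1, X_2, \ldots, X_n }[/math] are independent random variables taking values in [math]\displaystyle{ \{0,1\} }[/math]. Let [math]\displaystyle{ \mu=\mathbf{E}[X] }[/math]. Then for any [math]\displaystyle{ \delta\gt 0 }[/math],

- [math]\displaystyle{ \Pr[X\ge (1+\delta)\mu]\le\left(\frac{e^{\delta}}{(1+\delta)^{(1+\delta)}}\right)^{\mu}. }[/math]

Proof: For any [math]\displaystyle{ \lambda\gt 0 }[/math], [math]\displaystyle{ X\ge (1+\delta)\mu }[/math] is equivalent to [math]\displaystyle{ e^{\lambda X}\ge e^{\lambda (1+\delta)\mu} }[/math], thus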

- [math]\displaystyle{ \begin{align} \Pr[X\ge (1+\delta)\mu] &= \Pr\left[e^{\lambda X}\ge e^{\lambda (1+\delta)\mu}\right]\\ &\le \frac{\mathbf{E}\left[e^{\lambda X}\right]}{e^{\lambda (1+\delta)\mu}}, \end{align} }[/math]

where the last step follows by Markov's inequality.

Computing the moment generating function [math]\displaystyle{ \mathbf{E}[e^{\lambda X}] }[/math]:

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[e^{\lambda X}\right] &= \mathbf{E}\left[e^{\lambda \sum_{i=1}^n X_i}\right]\\ &= \mathbf{E}\left[\prod_{i=1}^n e^{\lambda X_i}\right]\\ &= \prod_{i=1}^n \mathbf{E}\left[e^{\lambda X_i}\right]. & (\mbox{for independent random variables}) \end{align} }[/math]

Let [math]\displaystyle{ p_i=\Pr[X_i=1] }[/math] for [math]\displaystyle{ i=1,2,\ldots,n }[/math]. Then,

- [math]\displaystyle{ \mu=\mathbf{E}[X]=\mathbf{E}\left[\sum_{i=1}^n X_i\right]=\sum_{i=1}^n\mathbf{E}[X_i]=\sum_{i=1}^n p_i }[/math].

We bound the moment generating function for each individual [math]\displaystyle{ X_i }[/math] as follows.

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[e^{\lambda X_i}\right] &= p_i\cdot e^{\lambda\cdot 1}+(1-p_i)\cdot e^{\lambda\cdot 0}\\ &= 1+p_i(e^\lambda -1)\\ &\le e^{p_i(e^\lambda-1)}, \end{align} }[/math]

where in the last step we use the inequality [math]\displaystyle{ e^y\ge 1+y }[/math], which follows from the Taylor expansion of [math]\displaystyle{ e^y }[/math], with [math]\displaystyle{ y=p_i(e^\lambda-1)\ge 0 }[/math]. (By doing this, we transform the product into a sum of the [math]\displaystyle{ p_i }[/math], which is [math]\displaystyle{ \mu }[/math].)

Therefore,

- [math]\displaystyle{ \begin{align} \mathbf{E}\left[e^{\lambda X}\right] &= \prod_{i=1}^n \mathbf{E}\left[e^{\lambda X_i}\right]\\ &\le \prod_{i=1}^n e^{p_i(e^\lambda-1)}\\ &= \exp\left(\sum_{i=1}^n p_i(e^{\lambda}-1)\right)\\ &= e^{(e^\lambda-1)\mu}. \end{align} }[/math]

Thus, we have shown that for any [math]\displaystyle{ \lambda\gt 0 }[/math],

- [math]\displaystyle{ \begin{align} \Pr[X\ge (1+\delta)\mu] &\le \frac{\mathbf{E}\left[e^{\lambda X}\right]}{e^{\lambda (1+\delta)\mu}}\\ &\le \frac{e^{(e^\lambda-1)\mu}}{e^{\lambda (1+\delta)\mu}}\\ &= \left(\frac{e^{(e^\lambda-1)}}{e^{\lambda (1+\delta)}}\right)^\mu \end{align} }[/math].

For any [math]\displaystyle{ \delta\gt 0 }[/math], we can let [math]\displaystyle{ \lambda=\ln(1+\delta)\gt 0 }[/math] to get

- [math]\displaystyle{ \Pr[X\ge (1+\delta)\mu]\le\left(\frac{e^{\delta}}{(1+\delta)^{(1+\delta)}}\right)^{\mu}. }[/math]

[math]\displaystyle{ \square }[/math]

The idea of the proof is actually quite clear: we apply Markov's inequality to [math]\displaystyle{ e^{\lambda X} }[/math] and for the rest, we just estimate the moment generating function [math]\displaystyle{ \mathbf{E}[e^{\lambda X}] }[/math]. To make the bound as tight as possible, we minimize [math]\displaystyle{ \frac{e^{(e^\lambda-1)}}{e^{\lambda (1+\delta)}} }[/math] by setting [math]\displaystyle{ \lambda=\ln(1+\delta) }[/math], which can be justified by taking the derivative of [math]\displaystyle{ \frac{e^{(e^\lambda-1)}}{e^{\lambda (1+\delta)}} }[/math] with respect to [math]\displaystyle{ \lambda }[/math].
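The choice of [math]\displaystyle{ \lambda }[/math] and the strength of the resulting bound can be illustrated with a short Python sketch. It checks by a coarse grid search that [math]\displaystyle{ \lambda=\ln(1+\delta) }[/math] minimizes the expression from the proof, and compares the Chernoff bound with the exact upper tail and with Chebyshev's inequality. The parameters (1000 fair coin tosses, [math]\displaystyle{ \delta=0.2 }[/math]) and the function names are assumptions made for illustration.

```python
import math
from math import comb


def exponent_base(lam, delta):
    """The quantity e^{(e^lam - 1)} / e^{lam (1 + delta)} minimized in the proof."""
    return math.exp(math.exp(lam) - 1 - lam * (1 + delta))


def chernoff_upper(mu, delta):
    """Upper-tail Chernoff bound (e^delta / (1+delta)^(1+delta))^mu."""
    return (math.exp(delta) / (1 + delta) ** (1 + delta)) ** mu


n, delta = 1000, 0.2          # illustrative parameters: 1000 fair coin tosses
mu = n / 2
lam_star = math.log(1 + delta)

# A coarse grid search over lambda agrees with the closed-form minimizer.
grid = [i / 1000 for i in range(1, 1001)]
lam_grid = min(grid, key=lambda lam: exponent_base(lam, delta))

# Exact Pr[X >= (1 + delta) mu] = Pr[X >= 600] for X ~ Binomial(1000, 1/2).
exact = sum(comb(n, k) for k in range(600, n + 1)) / 2**n
chebyshev = (n / 4) / (delta * mu) ** 2

print(f"lambda*={lam_star:.4f} grid={lam_grid:.3f}")
print(f"exact={exact:.2e} chernoff={chernoff_upper(mu, delta):.2e} chebyshev={chebyshev:.3f}")
```

In this example the Chernoff bound is several orders of magnitude smaller than the Chebyshev bound, although it is still well above the exact tail probability.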

We then proceed to the lower tail, the probability that the random variable deviates below the mean value:

Chernoff bound (the lower tail):

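Let [math]\displaystyle{ X=\sum_{i=1}^n X_i }[/math], where [math]\displaystyle{ X_1, X_2, \ldots, X_n }[/math] are independent random variables taking values in [math]\displaystyle{ \{0,1\} }[/math]. Let [math]\displaystyle{ \mu=\mathbf{E}[X] }[/math]. Then for any [math]\displaystyle{ 0\lt \delta\lt 1 }[/math],

- [math]\displaystyle{ \Pr[X\le (1-\delta)\mu]\le\left(\frac{e^{-\delta}}{(1-\delta)^{(1-\delta)}}\right)^{\mu}. }[/math]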

Proof: For any [math]\displaystyle{ \lambda\lt 0 }[/math], by the same analysis as in the upper tail version,

- [math]\displaystyle{ \begin{align} \Pr[X\le (1-\delta)\mu] &= \Pr\left[e^{\lambda X}\ge e^{\lambda (1-\delta)\mu}\right]\\ &\le \frac{\mathbf{E}\left[e^{\lambda X}\right]}{e^{\lambda (1-\delta)\mu}}\\ &\le \left(\frac{e^{(e^\lambda-1)}}{e^{\lambda (1-\delta)}}\right)^\mu. \end{align} }[/math]

For any [math]\displaystyle{ 0\lt \delta\lt 1 }[/math], we can let [math]\displaystyle{ \lambda=\ln(1-\delta)\lt 0 }[/math] to get

- [math]\displaystyle{ \Pr[X\le (1-\delta)\mu]\le\left(\frac{e^{-\delta}}{(1-\delta)^{(1-\delta)}}\right)^{\mu}. }[/math]

[math]\displaystyle{ \square }[/math]

Some useful special forms can be derived directly from the general forms above. They make it clearer why we call the bounds exponentially sharp.

Useful forms of the Chernoff bound

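Let [math]\displaystyle{ X=\sum_{i=1}^n X_i }[/math], where [math]\displaystyle{ X_1, X_2, \ldots, X_n }[/math] are independent random variables taking values in [math]\displaystyle{ \{0,1\} }[/math]. Let [math]\displaystyle{ \mu=\mathbf{E}[X] }[/math]. Then

(1) for [math]\displaystyle{ 0\lt \delta\le 1 }[/math],

- [math]\displaystyle{ \Pr[X\ge (1+\delta)\mu]\le \exp\left(-\frac{\mu\delta^2}{3}\right), }[/math]

and for [math]\displaystyle{ 0\lt \delta\lt 1 }[/math],

- [math]\displaystyle{ \Pr[X\le (1-\delta)\mu]\le \exp\left(-\frac{\mu\delta^2}{2}\right); }[/math]

(2) for [math]\displaystyle{ t\ge 2e\mu }[/math],

- [math]\displaystyle{ \Pr[X\ge t]\le 2^{-t}. }[/math]

Proof: To obtain the bounds in (1), we need to show that [math]\displaystyle{ \frac{e^{\delta}}{(1+\delta)^{(1+\delta)}}\le e^{-\delta^2/3} }[/math] for [math]\displaystyle{ 0\lt \delta\le 1 }[/math] and [math]\displaystyle{ \frac{e^{-\delta}}{(1-\delta)^{(1-\delta)}}\le e^{-\delta^2/2} }[/math] for [math]\displaystyle{ 0\lt \delta\lt 1 }[/math]. Both inequalities can be verified by standard analysis techniques.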

To obtain the bound in (2), let [math]\displaystyle{ t=(1+\delta)\mu }[/math]. Then [math]\displaystyle{ \delta=t/\mu-1\ge 2e-1 }[/math]. Hence,

- [math]\displaystyle{ \begin{align} \Pr[X\ge(1+\delta)\mu] &\le \left(\frac{e^\delta}{(1+\delta)^{(1+\delta)}}\right)^\mu\\ &\le \left(\frac{e}{1+\delta}\right)^{(1+\delta)\mu}\\ &\le \left(\frac{e}{2e}\right)^t\\ &\le 2^{-t} \end{align} }[/math]

[math]\displaystyle{ \square }[/math]
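The two inequalities used for the simplified forms can be checked numerically on a grid. This is only a sanity check under an assumed grid resolution, not a replacement for the analytic proof; the helper names `upper_base` and `lower_base` are hypothetical.

```python
import math


def upper_base(delta):
    """e^delta / (1 + delta)^(1 + delta), the base of the upper-tail bound."""
    return math.exp(delta) / (1 + delta) ** (1 + delta)


def lower_base(delta):
    """e^(-delta) / (1 - delta)^(1 - delta), the base of the lower-tail bound."""
    return math.exp(-delta) / (1 - delta) ** (1 - delta)


deltas = [i / 1000 for i in range(1, 1000)]   # grid over 0 < delta < 1

# The upper-tail inequality is also checked at the endpoint delta = 1.
upper_ok = all(upper_base(d) <= math.exp(-d * d / 3) for d in deltas + [1.0])
lower_ok = all(lower_base(d) <= math.exp(-d * d / 2) for d in deltas)

# One instance of the second form: mu = 1 and t = 6 >= 2e * mu.
mu, t = 1.0, 6.0
form2_ok = upper_base(t / mu - 1) ** mu <= 2 ** -t

print(upper_ok, lower_ok, form2_ok)
```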

Applications of Chernoff Bounds

We now introduce some applications of Chernoff bounds in randomized algorithms.

Balls into bins

Suppose we throw [math]\displaystyle{ m }[/math] balls uniformly and independently into [math]\displaystyle{ n }[/math] bins. What is the maximum load over all bins? In the last class, by using a counting argument, we proved that for the case that [math]\displaystyle{ m=n }[/math], the maximum load is [math]\displaystyle{ O\left(\frac{\ln n}{\ln\ln n}\right) }[/math] with high probability. Now we show that when there are more balls, the loads are more balanced.

For any [math]\displaystyle{ i\in[n] }[/math] and [math]\displaystyle{ j\in[m] }[/math], let [math]\displaystyle{ X_{ij} }[/math] be the indicator variable for the event that ball [math]\displaystyle{ j }[/math] is thrown to bin [math]\displaystyle{ i }[/math]. Obviously

- [math]\displaystyle{ \mathbf{E}[X_{ij}]=\Pr[\mbox{ball }j\mbox{ is thrown to bin }i]=\frac{1}{n} }[/math]

Let [math]\displaystyle{ Y_i=\sum_{j\in[m]}X_{ij} }[/math] be the load of bin [math]\displaystyle{ i }[/math].

Let us consider the case when [math]\displaystyle{ m=6n\ln n }[/math]. Then the expected load of bin [math]\displaystyle{ i }[/math] is

- [math]\displaystyle{ \mu=\mathbf{E}[Y_i]=\mathbf{E}\left[\sum_{j\in[m]}X_{ij}\right]=\sum_{j\in[m]}\mathbf{E}[X_{ij}]=m/n=6\ln n }[/math].

Note that [math]\displaystyle{ Y_i }[/math] is a sum of [math]\displaystyle{ m }[/math] mutually independent indicator variables. Applying the Chernoff bound with [math]\displaystyle{ \delta=1 }[/math], for any particular bin [math]\displaystyle{ i\in[n] }[/math],

- [math]\displaystyle{ \Pr[Y_i\gt 12\ln n] =\Pr[Y_i\gt (1+1)\mu]\le e^{-\frac{\mu}{3}} = e^{-2\ln n}= \frac{1}{n^2}. }[/math]

Applying the union bound, the probability that there exists a bin with load [math]\displaystyle{ \gt 12\ln n }[/math] is at most

- [math]\displaystyle{ n\cdot \Pr[Y_1\gt 12\ln n]\le \frac{1}{n} }[/math].

Therefore, with probability at least [math]\displaystyle{ 1-\frac{1}{n} }[/math], the maximum load is at most [math]\displaystyle{ 12\ln n=O(m/n) }[/math].
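The conclusion can be illustrated by a small simulation. The sketch below throws [math]\displaystyle{ m=\lceil 6n\ln n\rceil }[/math] balls into [math]\displaystyle{ n }[/math] bins several times and compares the largest observed load with the threshold [math]\displaystyle{ 12\ln n }[/math]. The values of `n`, the seed, and the number of repetitions are arbitrary illustrative choices, and `max_load` is a hypothetical helper.

```python
import math
import random
from collections import Counter


def max_load(n, m, rng):
    """Throw m balls into n bins uniformly at random; return the maximum load."""
    loads = Counter(rng.randrange(n) for _ in range(m))
    return max(loads.values())


rng = random.Random(2010)          # fixed seed so the run is reproducible
n = 200
m = math.ceil(6 * n * math.log(n))
threshold = 12 * math.log(n)

observed = [max_load(n, m, rng) for _ in range(20)]
print(f"mean load = {m / n:.1f}, threshold = {threshold:.1f}, worst max load = {max(observed)}")
```

In such runs the maximum load typically stays well below the threshold, which suggests that the constant 12 obtained from the simplified Chernoff bound is not tight.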